In a series of lectures in 2025, Peter Thiel, co-founder and chairman of the defense analytics firm Palantir, attempted to map the modern history of artificial intelligence onto a Judeo-Christian end-times framework. He drew on the Book of Revelation, in which St. John bears witness to the end-times prophecies at the core of Christian cosmology. Thiel described AI developers as having “conjured up a demon in whose existence they claim not to believe,” and more broadly asserted that AI has a demonic dimension which, if entertained, will hasten the arrival of the rapture. His claim is not that AI itself will become the Antichrist, but that it serves the Antichrist’s aim of displacing the “human logos” and readying the world for its end.

Amid the ongoing generative AI revolution, most people are concerned about job loss, autonomy or misinformation, but for many prominent tech titans, the chief concern has been the end of the world as we know it, whether apocalyptic or utopian. Enduring the past three years of the “generative revolution” has meant putting up with some of the very people engineering our AI future (deep learning experts, tech CEOs, their VPs and senior AI engineers) claiming that AI will lead to the destruction of humanity: genuine, straight-faced doomsday predictions from the makers themselves. Others claim that it will create a world where prosperity of unrecognizable proportions will be commonplace. All in all, tech leaders seem less concerned with the day-to-day externalities of their inventions than with transcendence: heaven or hell.

Why is this? One possible explanation is that Silicon Valley needs you to think of AI as something with immeasurable potential in order to sustain profit objectives that would otherwise look like wishful thinking. Founders and CEOs from Sam Altman to Dario Amodei have prophesied that AI will change the world in ways we can’t even fathom, and these claims appear to coincide with an urgent need to deliver on equally unfathomable promises made to investors. A world convinced that AI can put a man on Mars or solve the climate crisis is more likely to support leaving the AI industry unregulated, and a society that puts AI and the Antichrist in the same conversation is more likely to overlook the audacity of OpenAI’s $1.4 trillion in spending commitments. One way or another, Silicon Valley leadership faces an uphill battle to achieve profitability, and this rhetorical strategy might afford it the economic and social power to win that battle.

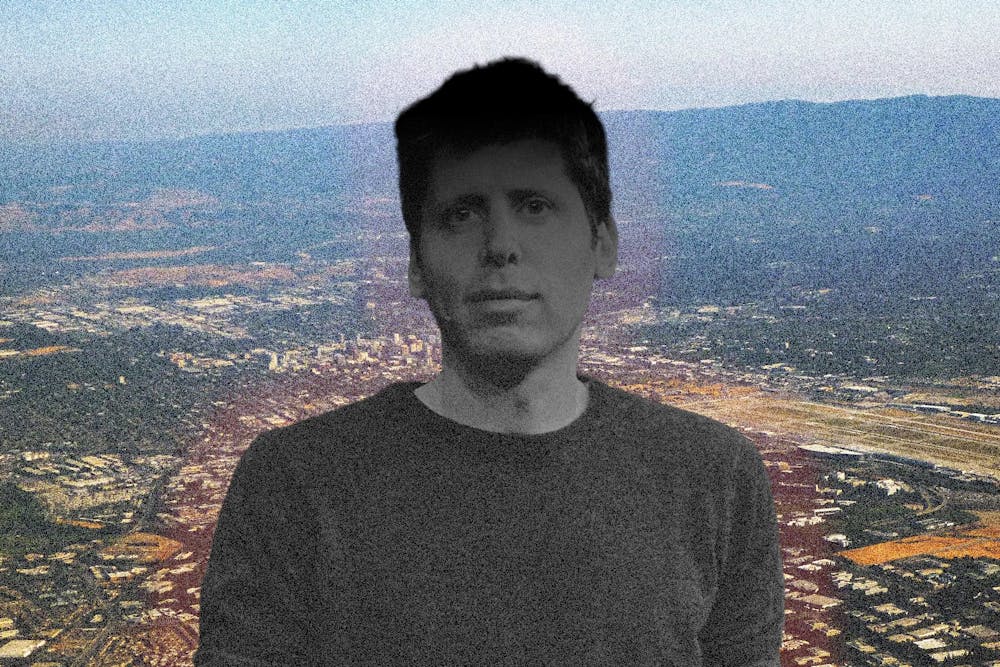

Sam Altman, co-founder and CEO of OpenAI, has made his transcendent beliefs about ChatGPT clear. In 2023, when OpenAI’s valuation was just 6% of what it is today, he testified before the U.S. Senate, urging lawmakers to pass thoughtful regulation that would mitigate the chief risk of AI overpowering humanity. Later, in 2024 and 2025, amid a more anti-regulatory political climate, Altman wrote a series of manifestos painting a picture of a world unrecognizable to our grandparents. Alarmist or optimistic, his rhetoric casts generative AI in an otherworldly light, taking on an almost dreamlike quality when he describes in detail the digital future we’re in for: “Although it will happen incrementally, astounding triumphs – fixing the climate, establishing a space colony, and the discovery of all of physics – will eventually become commonplace.” Summing up his ambition, he writes, “I believe the future is going to be so bright that no one can do it justice by trying to write about it now; a defining characteristic of the Intelligence Age will be massive prosperity.” He offers another take in a similar, less visible piece: “In the 2030s, intelligence and energy—ideas, and the ability to make ideas happen—are going to become wildly abundant.”

We’ve grown used to these promises of transcendent prosperity, even as the pendulum swings arbitrarily between optimism and pessimism. In an interview with Tucker Carlson, Elon Musk sardonically raised more pessimistic, even apocalyptic, concerns about the technology, claiming there is a “non-trivial” chance AI could cause “civilization destruction.” Elsewhere, Musk has put it even more starkly: “Mark my words, AI is far more dangerous than nukes… If AI has a goal and humanity just happens to be in the way, it will destroy humanity as a matter of course.” In a January 2026 essay, Anthropic CEO Dario Amodei claimed that “Humanity is about to be handed almost unimaginable power, and it is deeply unclear whether our social, political, and technological systems possess the maturity to wield it.” At a September 2025 summit in Washington, D.C., he added more bluntly that “there’s a 25% chance that things go really, really badly.” Bill Gates, Geoffrey Hinton, Peter Thiel and a dozen other tech titans have contributed their thoughts to this discourse.

It would help to know how urgent, or how true, these predictions really are, so we can pressure-test the credibility of these alarmist tech titans. Technologists will debate whether the state of the art is really as dangerous as it’s being made out to be, but suppose we assume it all to be true. OK then: we give in to the pressure to trust Elon Musk, and we believe AI is going to lead to extinction. Let’s do something about it, then. But why is the tone of Silicon Valley so anti-regulatory? Case in point: in late 2025, Silicon Valley poured $150 million into lobbying for a ban on state-level AI regulation. Its arguments centered on burdensome regulations that chill innovation in the face of a growing global techno-arms race. This anti-regulatory insistence appears to be working: the Trump administration’s policies have so far afforded Silicon Valley significant self-regulatory power.

Taken in aggregate, their behavior is contradictory: they insist that AI has the capacity to existentially threaten humanity, yet they crucially circumvent democratic institutions, pairing these existential threats with the implication that only they hold the power to tame this technology.

Why would Silicon Valley want to simultaneously convince us that (a) AI is this transcendent, transhumanist technology that could end the world, and (b) we need a hands-off-the-technology paradigm? The aim is to render AI ungovernable in the public imagination — too powerful to regulate, too important to restrain — leaving these companies free to spend, build and chase new financial highs while the question of economic recklessness goes unasked.

Some of these Silicon Valley tech titans may genuinely believe that their inventions transcend human comprehension; others may not even buy their own talk. Much like their insistence on deregulation, this transcendent rhetoric is best understood as a necessity of their present profit ambitions. To investors’ frustration, tech leaders have yet to point to any hard evidence of genuine economic benefits that would justify their lofty revenue projections, so what fills that void? Transcendent rhetoric that keeps investors optimistic, the news cycle fixated and users coming back. The mirage of doomsday rhetoric matches the mirage in the balance sheet.

These companies are ballooning in size on sky-high valuations driven by investor sentiment, then investing, lending and trading that capital with one another: a closed loop, and likely a bubble, propped up by ambitious long-term revenue projections. Right now, investors are willing to pay around $50 (sometimes as much as $100) for every $1 in revenue that these tech titans bring in, reflected in what many analysts see as inflated price-to-sales ratios. The existence and success of this entire system, trillions of dollars of market cap, depend on (a) continued optimism from Silicon Valley investors and (b) AI companies having a long-term path to realizing these revenue goals.

Right now, little evidence suggests Silicon Valley is prepared to deliver. Take OpenAI as a prime example: alongside its recent restructuring as a for-profit company, it released highly ambitious revenue goals for the coming years. According to company projections, OpenAI expects to grow revenue from $13 billion in 2025 to nearly $200 billion in 2030, with negative annual cash flow of up to $50 billion (in 2028) attributable to data center construction. HSBC estimates that the company still won’t be profitable even into the next decade and is $207 billion short of what it needs to realize its vision for more computing power and data centers. In essence, the company needs a business model that reconciles its ambitions for profitability and data center capacity with its underperforming top-line revenue.

For all Sam Altman’s talk about AI ushering in an era of unfathomable prosperity, it’s curious that his most recent strategic move was to release Sora 2 with a short-form video format and social media interface. OpenAI also announced plans to make more intimate and sexually explicit content available to adult users, and in January 2026 it announced it would begin selling ad space on the free version of ChatGPT, a move that drew ironic responses from a competitor. All of these announcements suggest an urgent strategic pivot away from supposedly world-changing AI toward more monetizable products built on the cultivation of user attention.

Why did Altman decide to introduce ads, restructure as a for-profit and release a hellish version of TikTok after years of prophesying an AI heaven? Because OpenAI is screwed, not the world.

On a November 2025 podcast appearance, Sam Altman met doubts about OpenAI’s revenue viability with open exasperation. After the host delivered a minute-long rant questioning that viability, contrasting OpenAI’s current $13 billion in revenue with its ambitious $1.4 trillion in spending commitments, Altman cut him off: “Brad, if you want to sell your shares, I’ll find you a buyer… enough.” Seated in front of two framed images depicting human space travel, Altman appeared defensive, as many commenters noted.

Perhaps the tendency to prophesy apocalypse or utopia is better understood as simply good business under ordinary, if urgent, financial circumstances. I argue that the existential threat AI poses right now isn’t to humanity; it’s to companies like OpenAI that have wagered their existence on an unsound, high-stakes “AI bet.”